We lint our code, format it, and run type checks. Isn’t that enough? In 2025, the answer is increasingly no.

For years, tools like ESLint and Prettier have played a critical role in keeping codebases clean and consistent. Static analysis, the process of examining code without executing it, has been our primary method for identifying issues and enforcing best practices. It spans everything from syntax and style enforcement to type safety checks (using TypeScript), detecting unused code, analysing complexity, spotting security risks, and applying framework-specific rules for libraries like React or Angular.

Traditionally, we’ve used a mix of tools: ESLint for enforcing coding standards, Prettier for formatting, and sometimes TypeScript for type-based analysis. But maintaining and configuring these tools separately can be clunky and slow.

Meanwhile, frontend applications keep growing in size and complexity, teams are becoming more distributed, and AI-assisted coding tools are being widely adopted. We’re starting to hit the ceiling of what traditional linting can offer, just as static analysis becomes more essential than ever.

This new reality demands a toolchain that does more than catch syntax errors; it needs to understand context, enforce architecture, and operate at lightning speed. Fast, context-aware static analysis acts as a safety net, ensuring AI-generated code aligns with project standards before it ever reaches production.

It’s no longer just about catching missing semicolons or flagging console.log. We’re entering a new era where static analysis is becoming:

- smarter, with type- and context-aware checks

- faster, thanks to Rust-powered tools

- more integrated, combining linting, formatting, and enforcement into unified pipelines

Modern tools are emerging that deliver on all three.

What’s driving the shift in static analysis?

The first and most obvious reason is performance. Rust-powered tools like Biome and Oxlint can run 10–20x faster than traditional JavaScript-based linters, which means faster CI pipelines and near-instant feedback during local development. Benchmarks show Biome linting 1000+ files in under 500ms, while ESLint may take several seconds for the same files.

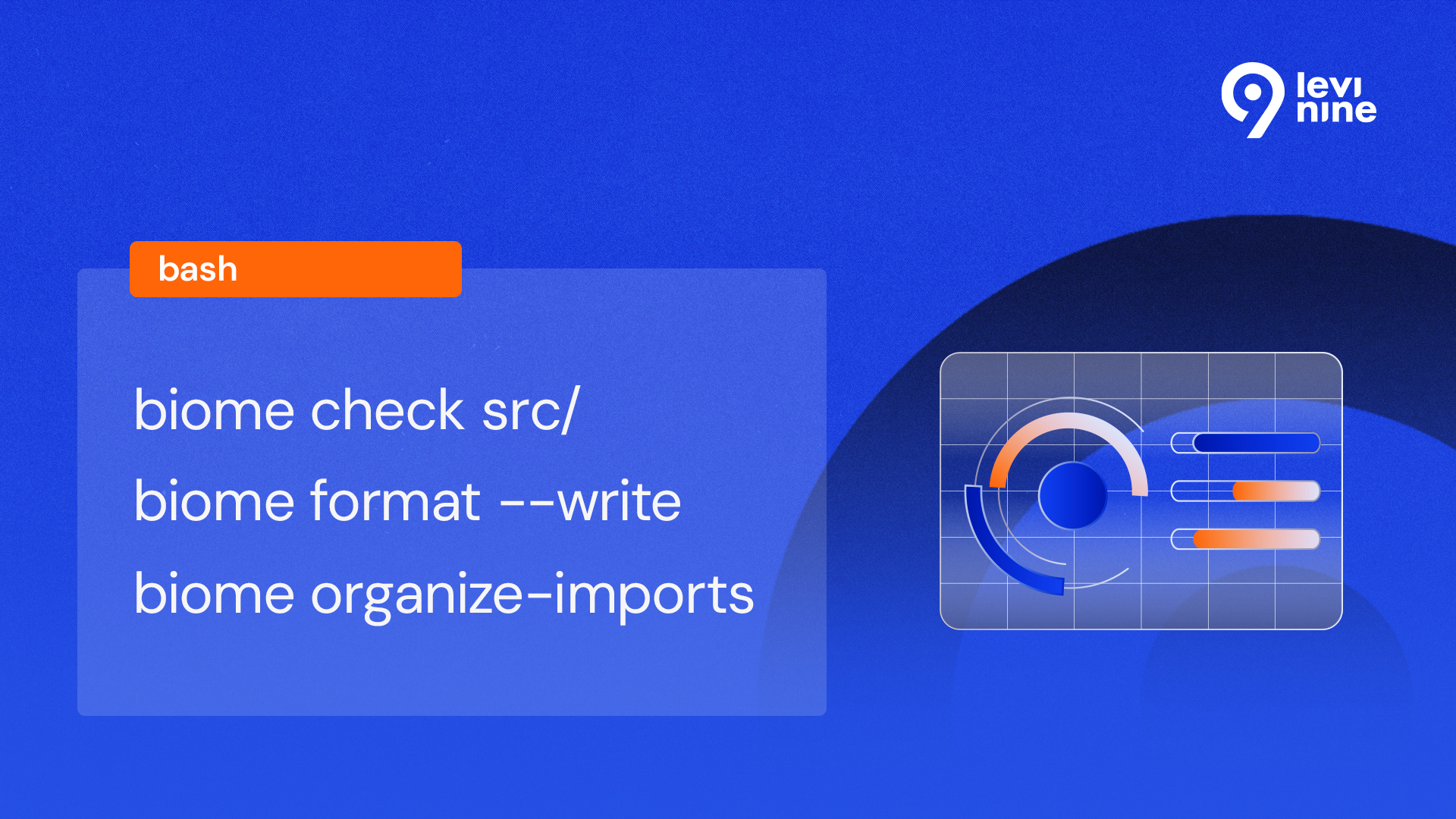
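Benchmark figures depend on hardware, rule sets, and codebase shape, so it’s worth measuring on your own repository. Below is a minimal TypeScript sketch for Node.js that times each linter over several runs. It assumes ESLint and Oxlint are installed as local dev dependencies on a POSIX system (so their binaries live in `node_modules/.bin`); the helper names `timeCommand` and `compareLinters` are hypothetical.

```typescript
import { spawnSync } from "node:child_process";
import { performance } from "node:perf_hooks";
import * as path from "node:path";

// Median wall-clock time (and last exit code) for one command.
interface TimedRun {
  label: string;
  medianMs: number;
  exitCode: number | null;
}

// Run `command` `runs` times, discarding its output, and report the median
// duration. A non-zero exit code usually just means the linter found problems.
function timeCommand(label: string, command: string, args: string[], runs = 5): TimedRun {
  if (runs < 1) throw new RangeError("runs must be at least 1");
  const durations: number[] = [];
  let exitCode: number | null = null;
  for (let i = 0; i < runs; i++) {
    const start = performance.now();
    const result = spawnSync(command, args, { stdio: "ignore" });
    const elapsed = performance.now() - start;
    if (result.error) throw result.error; // e.g. ENOENT if the binary is missing
    durations.push(elapsed);
    exitCode = result.status;
  }
  durations.sort((a, b) => a - b);
  // Middle element; for an even number of runs this picks the upper middle.
  const medianMs = durations[Math.floor(durations.length / 2)];
  return { label, medianMs, exitCode };
}

// Hypothetical helper: time ESLint and Oxlint on the current directory.
// Assumes both are local dev dependencies and a POSIX-style node_modules/.bin.
function compareLinters(runs = 5): void {
  const bin = (name: string) => path.join("node_modules", ".bin", name);
  const eslint = timeCommand("eslint", bin("eslint"), ["."], runs);
  const oxlint = timeCommand("oxlint", bin("oxlint"), ["."], runs);
  for (const run of [eslint, oxlint]) {
    console.log(`${run.label}: ${run.medianMs.toFixed(0)} ms (exit code ${run.exitCode})`);
  }
  console.log(`Oxlint speed-up: ${(eslint.medianMs / oxlint.medianMs).toFixed(1)}x`);
}
```

Calling `compareLinters()` from the repository root prints each tool’s median time and the ratio between them. Taking the median of several runs dampens noise from cold caches and background processes.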

While both tools offer incredible speed, they solve the problem in different ways. Oxlint is designed as a high-performance, drop-in replacement for ESLint, well suited to teams that want immediate speed gains without overhauling their existing configuration. Biome, on the other hand, is an all-in-one toolchain that replaces ESLint, Prettier, and more; it suits teams that want to simplify their entire stack with a single high-performance tool.

Another important advantage Biome brings to the table is unification. Instead of cobbling together separate tools (ESLint for linting, Prettier for formatting, custom scripts for import sorting, license headers, etc.), you can use a single binary like Biome that handles linting, formatting, and import organization with one config file and one CLI. This simplification can be especially valuable in monorepo setups or CI pipelines, where separate tools and plugins can create friction and version conflicts.

Last but not least come semantic and type-aware checks. Modern static analysers are built on AST (Abstract Syntax Tree) parsing, and many can leverage type information, which allows deeper, semantic insights into your codebase. With these capabilities, you can flag incorrect hook usage, enforce design system component usage, detect anti-patterns like deep prop drilling, and check for missing or invalid internationalization keys. You can also introduce custom rules that enforce patterns specific to your stack (e.g., blocking direct DOM access in a React app).
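The custom-rule idea is easiest to see in code. Below is a minimal TypeScript sketch of a rule that blocks direct `document` access. Its shape loosely mirrors ESLint’s rule API (a `create(context)` function that returns handlers keyed by AST node type, with problems reported through `context.report`), but the node types, the small walker, and the rule name `no-direct-dom-access` are simplified, hypothetical stand-ins so the example runs without any linter installed. Real tools get the AST from a full parser.

```typescript
// A tiny ESTree-like subset of node types (illustration only; real parsers
// produce many more node types and fields such as `loc` and `computed`).
type AstNode =
  | { type: "Program"; body: AstNode[] }
  | { type: "ExpressionStatement"; expression: AstNode }
  | { type: "CallExpression"; callee: AstNode; arguments: AstNode[] }
  | { type: "MemberExpression"; object: AstNode; property: AstNode }
  | { type: "Identifier"; name: string }
  | { type: "Literal"; value: string | number };

// Handlers keyed by node type, each receiving the matching node variant.
type Handlers = { [K in AstNode["type"]]?: (node: Extract<AstNode, { type: K }>) => void };

interface LintReport { node: AstNode; message: string; }
interface RuleContext { report(report: LintReport): void; }
interface Rule { create(context: RuleContext): Handlers; }

// Depth-first walk that calls the handler registered for each node's type.
function walk(node: AstNode, handlers: Handlers): void {
  const handler = handlers[node.type] as ((n: AstNode) => void) | undefined;
  handler?.(node);
  switch (node.type) {
    case "Program":
      node.body.forEach((child) => walk(child, handlers));
      break;
    case "ExpressionStatement":
      walk(node.expression, handlers);
      break;
    case "CallExpression":
      walk(node.callee, handlers);
      node.arguments.forEach((arg) => walk(arg, handlers));
      break;
    case "MemberExpression":
      walk(node.object, handlers);
      walk(node.property, handlers);
      break;
    default:
      break; // Identifier and Literal have no children.
  }
}

// Hypothetical rule "no-direct-dom-access": report member access on the
// global `document`, such as document.getElementById(...).
const noDirectDomAccess: Rule = {
  create(context) {
    return {
      MemberExpression(node) {
        if (node.object.type === "Identifier" && node.object.name === "document") {
          const prop = node.property.type === "Identifier" ? node.property.name : "<computed>";
          context.report({
            node,
            message: `Avoid direct DOM access (document.${prop}); use refs or framework APIs instead.`,
          });
        }
      },
    };
  },
};

// Run a rule over an AST and collect its messages.
function lint(ast: AstNode, rule: Rule): string[] {
  const messages: string[] = [];
  walk(ast, rule.create({ report: (r) => messages.push(r.message) }));
  return messages;
}

// Hand-built AST for: document.querySelector("#root");
const sample: AstNode = {
  type: "Program",
  body: [{
    type: "ExpressionStatement",
    expression: {
      type: "CallExpression",
      callee: {
        type: "MemberExpression",
        object: { type: "Identifier", name: "document" },
        property: { type: "Identifier", name: "querySelector" },
      },
      arguments: [{ type: "Literal", value: "#root" }],
    },
  }],
};

const reported = lint(sample, noDirectDomAccess); // one message, for document.querySelector
```

Because the handler only runs on `MemberExpression` nodes, the rule never has to reason about strings, comments, or formatting, which is what makes AST-based rules sturdier than text matching.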

This level of architectural enforcement is precisely what’s needed to act as a safety net for AI-generated code, ensuring it adheres to project-specific patterns that an AI model may not be aware of. This kind of analysis goes far beyond what most ESLint plugins offer and lays the groundwork for more intelligent, maintainable frontend systems.

Real-World Examples: From quick wins to architectural deep dives

Let’s look at a case study, the quick win: boosting performance with Oxlint. Suppose your team wants rules that flag:

- eval() usage

- dangerouslySetInnerHTML

- unvalidated raw fetch() calls (optional, requires custom logic)
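Of these, eval() and dangerouslySetInnerHTML are covered by existing rules (ESLint’s core `no-eval` and eslint-plugin-react’s `react/no-danger`), while the third needs custom logic. Below is a minimal sketch of one possible reading of “unvalidated raw fetch() calls”: flag every direct call to the global `fetch()` so that requests go through a wrapper that validates inputs and responses. The node shape is a simplified ESTree-like stand-in, and `apiClient`, `traverse`, and `findRawFetchCalls` are hypothetical names. In ESLint, a check this simple can often be expressed without custom code through the core `no-restricted-syntax` rule and a selector such as `CallExpression[callee.name='fetch']`.

```typescript
// Simplified ESTree-like node: a `type` plus arbitrary fields, some of which
// hold child nodes or arrays of child nodes.
interface AstNode {
  type: string;
  [key: string]: unknown;
}

interface Finding {
  line: number;
  message: string;
}

function isAstNode(value: unknown): value is AstNode {
  return typeof value === "object" && value !== null &&
    typeof (value as { type?: unknown }).type === "string";
}

// Generic depth-first traversal: visit a node, then recurse into any property
// that holds a node or an array of nodes. Assumes no parent back-references.
function traverse(node: AstNode, visit: (n: AstNode) => void): void {
  visit(node);
  for (const value of Object.values(node)) {
    const children: unknown[] = Array.isArray(value) ? value : [value];
    for (const child of children) {
      if (isAstNode(child)) traverse(child, visit);
    }
  }
}

// Hypothetical rule: report direct calls to the global fetch() so requests go
// through a validating wrapper (called `apiClient` here, also hypothetical).
// Calls such as window.fetch(...) are not caught by this sketch.
function findRawFetchCalls(program: AstNode): Finding[] {
  const findings: Finding[] = [];
  traverse(program, (node) => {
    const callee = node.callee;
    if (node.type === "CallExpression" && isAstNode(callee) &&
        callee.type === "Identifier" && callee.name === "fetch") {
      const loc = node.loc as { start: { line: number } } | undefined;
      findings.push({ line: loc?.start.line ?? 0, message: "Call apiClient instead of fetch() directly." });
    }
  });
  return findings;
}

// Hand-built AST for:
//   1: fetch("/api/users");
//   2: apiClient.get("/api/users");
const callStatement = (line: number, callee: AstNode): AstNode => ({
  type: "ExpressionStatement",
  loc: { start: { line } },
  expression: {
    type: "CallExpression",
    loc: { start: { line } },
    callee,
    arguments: [{ type: "Literal", value: "/api/users" }],
  },
});
const program: AstNode = {
  type: "Program",
  body: [
    callStatement(1, { type: "Identifier", name: "fetch" }),
    callStatement(2, {
      type: "MemberExpression",
      object: { type: "Identifier", name: "apiClient" },
      property: { type: "Identifier", name: "get" },
    }),
  ],
};

const rawFetches = findRawFetchCalls(program); // one finding, on line 1
```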

If you are using React, enabling these rules with ESLint means installing eslint and eslint-plugin-react. However, be aware that performance can lag in large codebases, setup requires multiple plugins and configurations, and you still need Prettier alongside it for formatting, so the toolchain stays fragmented.

Oxlint is designed as a fast drop-in replacement for ESLint, and its configuration format is largely compatible with ESLint’s .eslintrc.json format, so you can often reuse the same rule setup with few or no changes.

Simply swap the CLI command for `npx oxlint . --fix`, and you’re ready to go. This gives you an immediate performance boost with little or no config migration, making Oxlint an ideal choice for teams looking to speed up linting without overhauling their toolchain.

How to introduce this in your team

1. Pilot it: Start with a non-critical project or submodule and compare performance, false positives, and developer experience (DX) against your current setup.

2. Plan a gradual migration: Oxlint’s configuration is largely compatible with ESLint’s, making it the easiest entry point. Biome’s CLI can generate a starter configuration and help migrate existing ESLint and Prettier settings.

Try Biome on a small module and identify 2–3 complex rules to rewrite using its AST-based engine.

If ease of transition is your priority, start with Oxlint and migrate to Biome as your toolchain matures.

3. Integrate into CI/CD: Run linting on pull requests and in CI jobs. Monitor run times, pass rates, and team feedback. You’ll likely find that less time is spent debugging config and more time is spent writing clean, confident code.
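The pilot’s comparison of false positives can be made more systematic by normalising each linter’s findings into one shape and diffing them. The following is a minimal sketch; the `Diagnostic` shape and the `fromEslintJson` and `diffFindings` helpers are hypothetical. Only an ESLint adapter is shown, reading the `filePath`, `messages[].ruleId`, and `messages[].line` fields of ESLint’s `--format json` output; an equivalent adapter for whatever report format you export from Oxlint would be needed in practice.

```typescript
// Hypothetical tool-neutral shape for one reported problem. Rule IDs may be
// spelled differently per tool, so normalise them inside each adapter.
interface Diagnostic {
  file: string;
  line: number;
  rule: string;
}

// The fields this sketch reads from ESLint's `--format json` output.
interface EslintResult {
  filePath: string;
  messages: { ruleId: string | null; line: number }[];
}

function fromEslintJson(results: EslintResult[]): Diagnostic[] {
  const out: Diagnostic[] = [];
  for (const result of results) {
    for (const m of result.messages) {
      // ruleId is null for messages not tied to a rule, such as fatal parse errors.
      out.push({ file: result.filePath, line: m.line, rule: m.ruleId ?? "parse-error" });
    }
  }
  return out;
}

interface FindingsDiff {
  both: Diagnostic[];
  onlyBaseline: Diagnostic[];
  onlyCandidate: Diagnostic[];
}

// Two findings count as "the same" when file, line and rule all match.
const keyOf = (d: Diagnostic): string => `${d.file}:${d.line}:${d.rule}`;

// Findings only the candidate reports are where to look for false positives;
// findings only the baseline reports may be rules the candidate lacks or misses.
function diffFindings(baseline: Diagnostic[], candidate: Diagnostic[]): FindingsDiff {
  const baselineKeys = new Set(baseline.map(keyOf));
  const candidateKeys = new Set(candidate.map(keyOf));
  return {
    both: baseline.filter((d) => candidateKeys.has(keyOf(d))),
    onlyBaseline: baseline.filter((d) => !candidateKeys.has(keyOf(d))),
    onlyCandidate: candidate.filter((d) => !baselineKeys.has(keyOf(d))),
  };
}

// Illustrative, hand-written findings (not real tool output).
const eslintFindings = fromEslintJson([
  { filePath: "src/App.tsx", messages: [{ ruleId: "no-eval", line: 12 }] },
  { filePath: "src/Menu.tsx", messages: [{ ruleId: "react/no-danger", line: 40 }] },
]);
const oxlintFindings: Diagnostic[] = [
  { file: "src/App.tsx", line: 12, rule: "no-eval" },
  { file: "src/utils.ts", line: 3, rule: "no-unused-vars" },
];

const diff = diffFindings(eslintFindings, oxlintFindings);
// diff.both: App.tsx:12; diff.onlyBaseline: Menu.tsx:40; diff.onlyCandidate: utils.ts:3
```

Matching on file, line, and rule is deliberately strict; if the tools report slightly different line numbers for the same issue, loosening the key to file and rule is a reasonable trade-off.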

Final Thoughts

Today’s applications are larger, more dynamic, and developed by distributed teams working across monorepos, component libraries, and CI pipelines. In this context, static analysis is about empowering developers, enforcing architecture, and streamlining quality across the stack.

Modern tools like Oxlint and Biome are a response to this development. They address the limitations of legacy tooling by offering:

- lightning-fast performance, even in huge projects

- unified workflows that reduce maintenance overhead

- smarter analysis, with potential for deeper, semantic rule sets

- a cleaner developer experience, with fewer moving parts and easier CI integration

If you haven’t looked beyond ESLint in a while, now’s the time.

Static analysis is no longer just a background task; it’s becoming a strategic layer in frontend engineering, shaping how we write, review, and ship code. Whether you adopt these tools today or in the next six months, the direction is clear: faster, smarter, and more unified tooling is here to stay. The future of frontend linting isn’t just about catching bugs; it’s about building confidence, clarity, and consistency into every commit.