Look at all those intricate, beautiful images of a flying car future generated by artificial intelligence. Spend some time reading a 10,000-word essay that an AI wrote on how humanity is on the brink of a technological revolution. Turn on some electronic beats, casually put together by an algorithm, as soothing background noise. Or, better yet, ask another AI to summarize the whole thing in five bullet points.

As you sink deeper into awe before this technological wonder, know this: you are looking backward in time. Everything AI says has already been thought of by someone. Everything it draws imitates a human’s drawing. Those harmonies are inspired by a human’s musical compositions.

AI can only do more of what humanity has already done. For now. This was one of the main conclusions of a recent debate on artificial intelligence held at Levi9. We gathered around a virtual table to discuss whether AI can truly create art, whether it will ever replace archetypal myths in human history, and whether humans will ever reach a point where they no longer understand what they created.

AI can make us forget how everything works

After World War II, tribes from Papua New Guinea in the Melanesian islands would spend their days in wooden air traffic control towers, worshiping plane wrecks, and playing soldiers. They were part of what became known as the Cargo Cult. Their strange rituals were trying to entice the gods of the sky to keep dropping food, clothes, medicine, and weapons from their flying machines. They did not understand how all those supplies came to arrive on their island, dropped from planes by American or Japanese soldiers, and they were asking for more.

Codrin Băleanu, Engineering Lead at Levi9, thinks about this story when he weighs the potential dangers of artificial intelligence. “As technology becomes more abstract, we forget how to do the basics.”

His colleague, Călin Braic, Levi9 Recruiter, agrees and foresees a “first” in history. “In our history, every great loss of knowledge starts with a big disruption, with a tragedy. It might be a collapse, an invasion, an epidemic, a plague, or something of that sort. This time, the trigger might be our tendency to take knowledge for granted. And this would be a first in the history of mankind.”

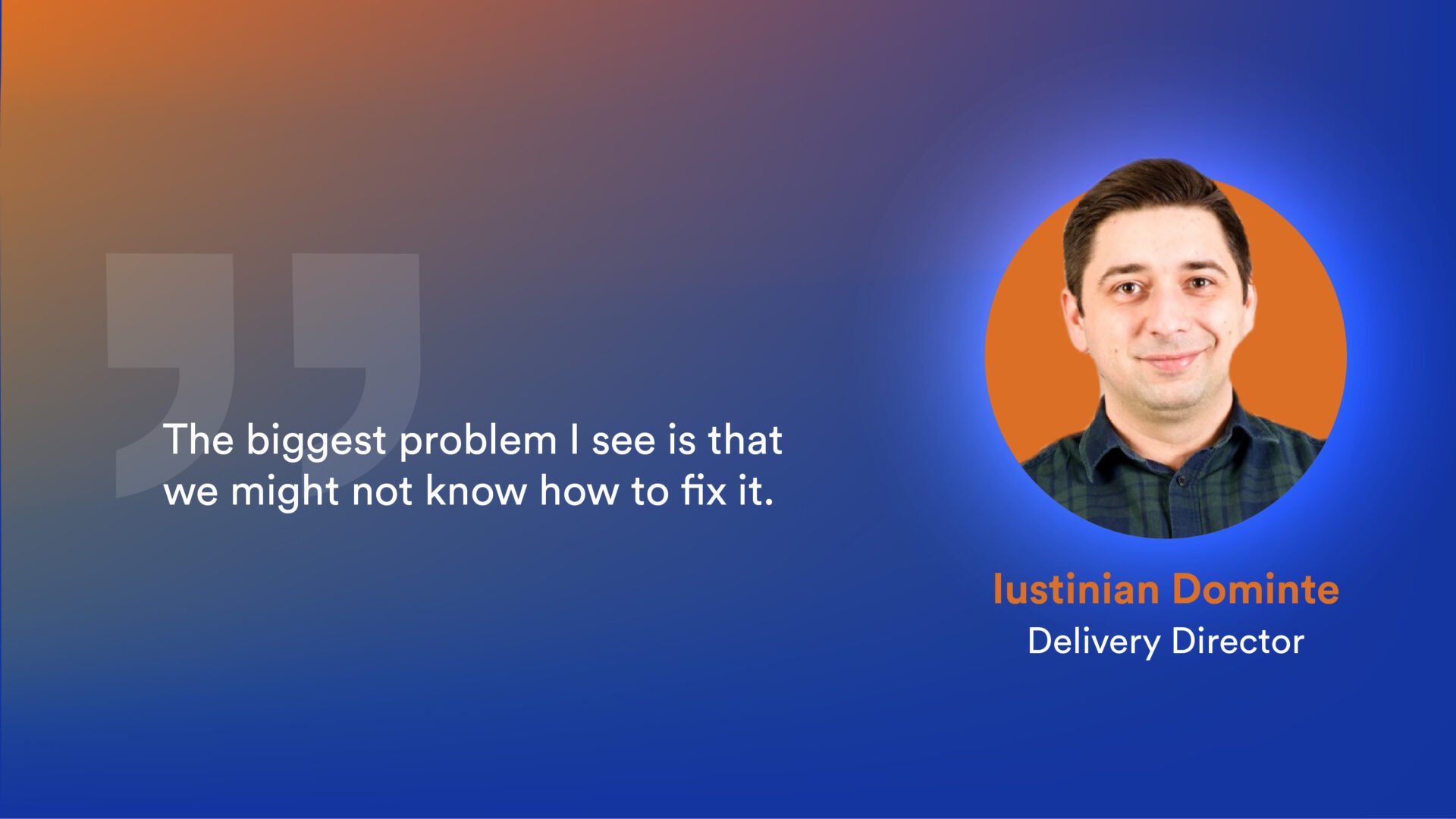

Software development is already moving in that direction. More often than not, developers default to libraries that make their job easier without understanding how they work. While shortcuts can simplify things at the start of a project, matters become complicated when something needs to be fixed. With new tools such as GitHub’s Copilot, this issue might be compounded. “We may end up using AI-generated pieces of code without knowing how to use them or understanding what’s inside.”

But are “easier,” “automatic,” and “premade” synonymous with forgetfulness? Levi9’s Talent Manager, Cristina Soos, does not agree. “Painters used to create and mix their own colors,” she argues. “Now they come in a box. Does this mean we don’t have art anymore?”

AI carries our own biases

And for that matter, can AI create art? “Even if AI can randomly come up with the Mona Lisa,” says Eliza Enache, Data Engineer, “it’s not the same thing.” In Eliza’s view, the concept of art in itself has many layers. “One of the key concepts of art is that people look at it and perhaps understand different aspects, and they have different feelings. A person can look at Mona Lisa’s smile and imagine what they want.” Art is layered and contextualized by the stories we tell ourselves about it, and it also contains a piece of its creator, making it unique.

Artificially made images, on the other hand, can only be variations on the five billion images that were used to train the neural network. “And about 80% of them are copyrighted,” points out Călin Braic, raising a pressing new ethical issue in the age of AI. “If a user is looking for an image of the Witcher fighting a dragon, nine times out of ten, they will find a design inspired by Grzegorz Rutkowski, one of the best-known video game illustrators. But his work was used without his consent. Such examples and many others make it difficult to morally defend AI-generated imagery.”

But there is a further, more nuanced issue. “If humanity’s past collective knowledge is used to generate new data, how do we make sure it’s not biased?” asks Codrin. Indeed, our past carries its own biases and limitations. For example, 97% of the time it was asked to depict a CEO or director, the image generation tool DALL-E showed a white male. So, when we use AI to create more of the same, all these issues continue to plague the output, whether we are aware of them or not.

“Different, but the same” is typical of fairy tales and hero stories, the ones we keep hearing over and over at bedtime. At their core, they are retellings of different myths, archetypes of people, and cultural journeys. Now, with AI, you or your child can be the main character in such a story: just snap an image, modify it with Lensa to transform it into a story character, use ChatGPT to spit out a narrative, and you can get your own personal children’s book. “Cute, right?” smiles Călin. “But here’s the problem: Our bedtime stories had morals and life lessons.”

Eliza picks up the argument and continues, “The symbols in these ancient stories have become so deeply embedded into our collective being that you might use them as moral guidance when you are at a crossroads in life.” So what happens when an algorithm starts to create them?

Opinions vary. Cristina Soos, for example, believes that humans are still in charge of meaning, no matter what the content generator says. “Meaning lies in our brains. It’s an individual decision what lessons one draws from a story. Just think of our childhood stories. Take the Brothers Grimm’s horror stories, for example. They were violent and told tales about beheaded children and eaten grandmoms. However, when we went to sleep, what remained with us was the message that the good prevails and the bad will be punished.”

Intention is a muscle. Exercise it

It’s important to keep being in charge of meaning and decisions, even when it might be more comfortable to delegate them. “It happened throughout history. We delegated our decisions to priests, to tyrants, or to someone else in general. It requires less energy and humans are built to save their energy,” points out Cristina. “You might cede your power without realizing it.”

The difficult part will be not to lose yourself in the comfort of more and more automation, believes Eliza Enache. “With automation, there is a tendency to move further and further away from what is real. To go deeper into artificial things or let certain algorithms take over our decision-making power.” The solution? “We need to constantly exercise our intention, our power, our desire, our humanity. I think one has to deliberately practice and pay attention to not get carried away.”